One of the arguments you hear status-quo educators in DISD make is that we can’t compare outcomes at public schools to those at charter schools, because charters have it easier: the kids aren’t as poor, they come from all over the district, etc. This is an important argument because it seeks to explain away vastly better outcomes for kids coming through the best charter programs vs. kids coming through even the best DISD schools.

I’ve spent some time putting together data to test that theory, and, spoiler alert, I don’t think it holds water. But it’s very complicated, involves lots of data, and I’m trying to gather it all together and present it in a meaningful way. (This is difficult, because I’m slow, in every way.) In the meantime, I want to share some of the things this gathering process is revealing. One thing: I’m getting a clearer idea of what Mike Miles and others mean when they say we need to teach “grit.”

I heard that term as it applies to education for the first time in Miles’ office a month or so back, and since then I hear it everywhere. It’s the new education buzzword. But I wasn’t sure what it meant. Did it mean we need to teach kids to be SUPER COMPETITIVE, as Drew Magary laments?

No. As the inventor of the “Grit Scale,” Angela Lee Duckworth, says, “Grit is passion and perseverance for long-term goals. … And working really hard to make that future a reality. Grit is living life like it’s a marathon and not a sprint.” (See her full TED talk on the subject of grit here. BTW: My score on her Grit Scale: 2.33 on a scale of 1 [low grit] to 5 [high grit]. ME = MEH GRITTY.)

So that gets me to the data. One of the reasons our adherence to testing and college readiness is so problematic is that it doesn’t measure grit. In other words, those metrics are good indicators of smarts (like IQ), but not great measures of outcomes/achievement. Which is why tracking six-year graduation rates (do our DISD high schoolers graduate with 2- or 4-year degrees within six years?) is a much better measure of grit in students and success by our schools.

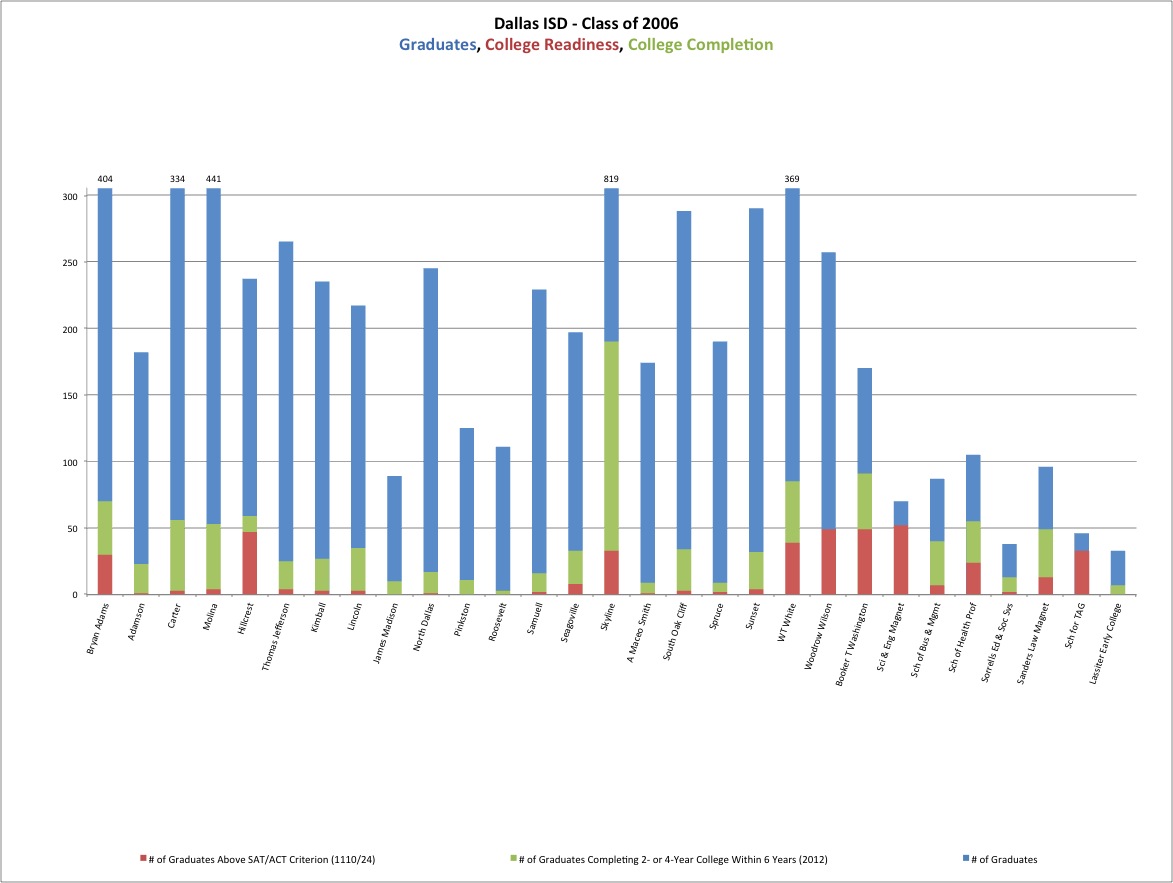

Which brings me to DISD high school performance in 2006. Here’s a chart below (I’ve uploaded the charts in Excel if you want to play with them there.) that shows DISD high schools’ total number of graduates (blue), how many of them tested as being “college-ready” (red), and how many of them graduated college by 2012 (green). Take a look at the chart below (click and click again to make-big):

Okay, see the green? That’s grit. Those are kids who do not score above 1110 SAT or 24 ACT, who nonetheless find the wherewithal to graduate college. Also: These are overwhelmingly poor kids with many fewer support systems available to them than you or I had.

And not to draw too much from one data set, but I think this also shows how different high schools can produce vastly different outcomes with basically the same sort of kids. Look at Skyline: 33 kids out of 819 graduates tested college ready (about 4 percent). But 190 kids from that class graduated with a 2- or 4-year degree in six years (about 23 percent). Those are the kids who had and/or were taught grit.
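If you want to check my Skyline math, here is a minimal sketch in Python. The three counts come straight from the paragraph above; the variable names and the `pct` helper are just mine for illustration. The last figure is a floor I'm adding as an assumption, not something the data reports directly: even if every single college-ready grad went on to earn a degree, at least that many degree earners had tested below the cut-off.

```python
def pct(part, whole):
    """Return part/whole as a percentage, rounded to one decimal place."""
    return round(100 * part / whole, 1)

# Skyline class of 2006, as quoted in the post
graduates = 819            # total graduates
college_ready = 33         # tested college-ready on the SAT/ACT
degree_in_six_years = 190  # earned a 2- or 4-year degree by 2012

ready_share = pct(college_ready, graduates)         # the "about 4 percent"
degree_share = pct(degree_in_six_years, graduates)  # the "about 23 percent"

# Assumption: treat every college-ready grad as a degree earner, so the
# difference is the minimum number who got a degree despite testing low.
min_grit_count = degree_in_six_years - college_ready
min_grit_share = pct(min_grit_count, graduates)

print(f"College-ready: {ready_share}% of graduates")
print(f"Degree within six years: {degree_share}% of graduates")
print(f"At least {min_grit_count} grads ({min_grit_share}%) beat their test scores")
```

The floor matters because the true number of gritty kids is probably higher: some of the 33 college-ready grads almost certainly did not finish a degree.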

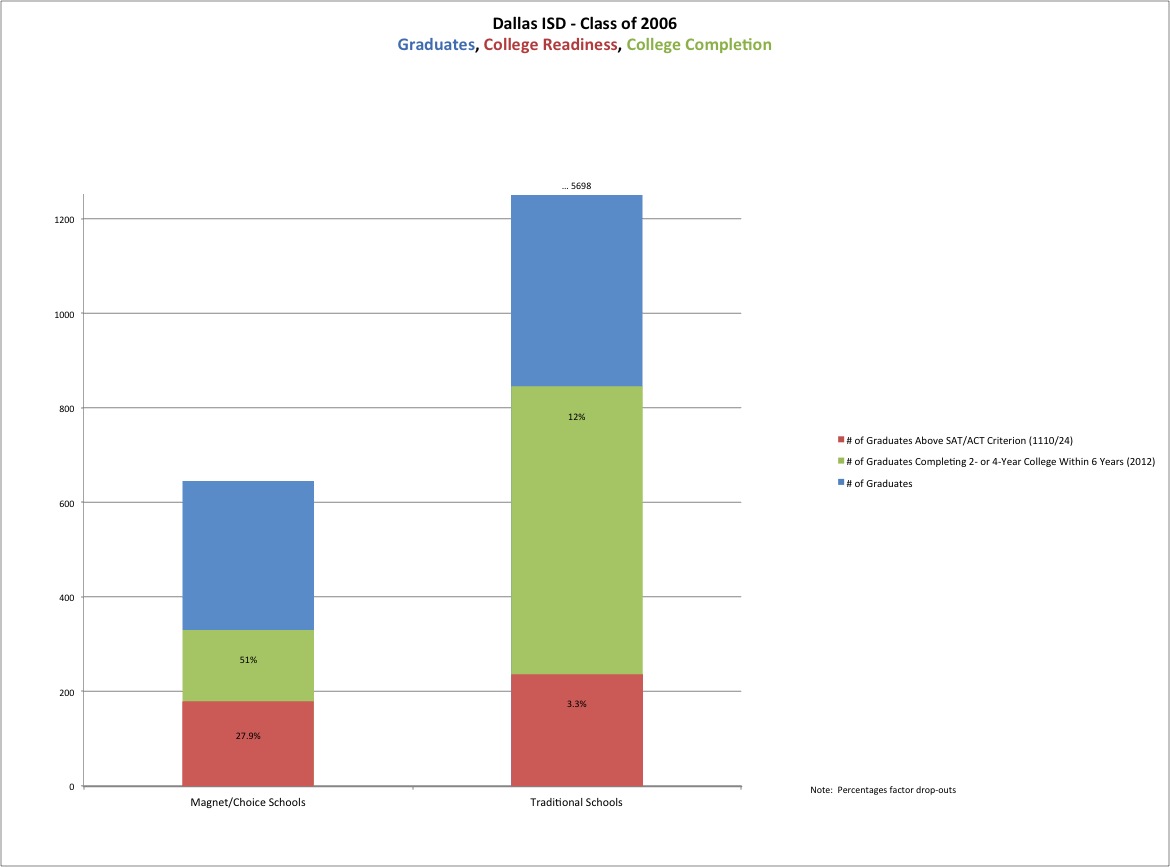

As you can see from the chart below (which combines this data for the whole district), many of those kids getting the best instruction at our magnet/choice schools also show a higher percentage of grit. (I’m assuming that, on the whole, the best schools have the best teachers. I know there are pockets of excellence throughout the district, but allow me this.) So 28 percent of our choice/magnet school grads were testing below college-readiness levels in 2006 but still got a degree by 2012. For all other high schools, that was true for just under 9 percent of 2006 graduates.

Without bogging down in the details of how I got the numbers (mix of TEA and data from here), I just wanted to give you a quick visual representation of these takeaways:

• College readiness, as defined by TEA’s cut-off for the SAT/ACT, shouldn’t be thought of as strictly predictive of college success. More kids succeed in college than their SAT/ACT scores predict. That’s because they get to college, damn near fail out due to lack of preparation, but overcome that lack of preparation through sheer tenacity. (Grit!) So college readiness is still useful to look at as a statement of aggregate K-12 preparation, not just how well those high schools are doing. Yes, it’s lousy in DISD across the board, but this can be thought of as a function of our poverty (which you can review in Holly Hacker’s story in the DMN).

• The number of college-ready kids is about the same (roughly 200) at the magnet schools as at the traditional schools, even though there are far fewer kids in the magnet/choice schools. (Also remember that college readiness tracks to poverty. See the chart Matthew Haag at the DMN put together that showed you this.)

Understanding these things will become important when we compare DISD outcomes to charter schools. I’ll get to that later in the week.

PROBABLY. As we know, I’m not very gritty.